A key component of the model was the Dirichlet process, which was used so that the number of clusters does not need to be set a priori. Instead, the number of clusters changes over the course of a simulation run, and the weight of each cluster component, as well as its parameters, is drawn from the Dirichlet process. This process can be constructed in a number of ways, but one of the most intuitive is the Chinese restaurant process.

The Chinese restaurant process

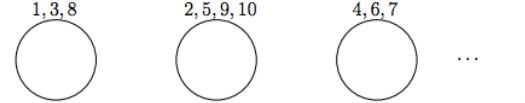

Imagine a Chinese restaurant with an infinite number of circular tables, each of which can seat an infinite number of customers. Each time another customer enters the restaurant, they choose a table at which to sit. The first customer simply sits at a random table. Every subsequent customer either joins a currently occupied table, with probability proportional to the number of customers already seated there, or starts a new table. Popular tables therefore tend to attract more customers, while a new table can always be opened. In this metaphor, each customer represents a data point and each table represents a cluster component.
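The seating procedure can be sketched as a short simulation. This is only an illustration, not the implementation used in this report. It assumes the standard form of the process, in which a customer joins an occupied table with weight equal to its current occupancy and opens a new table with weight `alpha`. The concentration parameter `alpha`, the function name `chinese_restaurant_process` and the `seed` argument are assumptions introduced here.

```python
import random


def chinese_restaurant_process(n_customers, alpha=1.0, seed=None):
    """Seat customers one at a time and return (assignments, table_sizes).

    alpha (> 0) is an assumed concentration parameter: larger values
    make opening a new table more likely.
    """
    rng = random.Random(seed)
    assignments = []  # assignments[i] is the table index of customer i
    table_sizes = []  # table_sizes[k] is the number of customers at table k
    for _ in range(n_customers):
        # Occupied tables are weighted by occupancy, a new table by alpha.
        weights = table_sizes + [alpha]
        table = rng.choices(range(len(weights)), weights=weights)[0]
        if table == len(table_sizes):
            table_sizes.append(1)  # open a new table (new cluster)
        else:
            table_sizes[table] += 1  # join an existing table
        assignments.append(table)
    return assignments, table_sizes


if __name__ == "__main__":
    assignments, table_sizes = chinese_restaurant_process(50, alpha=1.0, seed=0)
    print("tables occupied:", len(table_sizes))
    print("table sizes:", table_sizes)
```

Each entry of `assignments` plays the role of a cluster label for one data point, and `len(table_sizes)` is the number of clusters in use, which is not fixed in advance.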

Applied to this model

The Dirichlet process is popular across a range of applications of Bayesian analysis in statistics and machine learning. Some of the most popular applications are:

- Bayesian model validation,

- density estimation,

- and clustering via mixture models.

In this report, the flexible nature of the Dirichlet process is used in an unsupervised classification model. More precisely, the Dirichlet process is used as a prior over the weights of the mixture components in a Gaussian mixture model.
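One common way to draw mixture weights from a Dirichlet process prior is the stick-breaking construction, sketched below in truncated form. This is a different construction from the Chinese restaurant process and is not necessarily the one used in this report. The concentration parameter `alpha`, the truncation level `n_components` and the function name are assumptions made for illustration.

```python
import random


def stick_breaking_weights(alpha=1.0, n_components=20, seed=None):
    """Draw mixture weights by truncated stick-breaking.

    Each step breaks off a Beta(1, alpha) fraction of the remaining
    stick. The truncation at n_components is an approximation: the
    leftover stick is assigned to the last component so that the
    weights sum to one.
    """
    rng = random.Random(seed)
    weights = []
    remaining = 1.0  # length of stick not yet assigned
    for _ in range(n_components - 1):
        fraction = rng.betavariate(1.0, alpha)
        weights.append(remaining * fraction)
        remaining *= 1.0 - fraction
    weights.append(remaining)
    return weights


if __name__ == "__main__":
    weights = stick_breaking_weights(alpha=2.0, n_components=10, seed=1)
    print([round(w, 3) for w in weights])
```

Smaller values of `alpha` tend to concentrate the weight on a few components, while larger values spread it over more of them.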

The use of the Dirichlet process in a mixture model is also referred to as an infinite mixture model because, even if the data exhibit a finite number of components, additional data can reveal previously unseen components. In other words, the number of clusters can grow as the size of the data set increases. This approach is also described as non-parametric because the number of parameters is not fixed and can grow with the data.
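The growth in the number of clusters can be made concrete. Under the standard Chinese restaurant process with an assumed concentration parameter `alpha` (not named in the text above), data point i + 1 opens a new cluster with probability alpha / (alpha + i), so the expected number of clusters after n points is the sum of these terms. The helper below is an illustrative sketch with a hypothetical name.

```python
def expected_num_clusters(n_points, alpha=1.0):
    """Expected number of occupied clusters after n_points data points.

    Sums the probability alpha / (alpha + i) that each successive point
    opens a new cluster, for i = 0, ..., n_points - 1.
    """
    return sum(alpha / (alpha + i) for i in range(n_points))


if __name__ == "__main__":
    for n in (10, 100, 1000, 10000):
        print(n, round(expected_num_clusters(n, alpha=1.0), 2))
```

The expected count keeps increasing with the size of the data set, but only slowly (roughly logarithmically in n), so a larger data set is expected to use more clusters without the count ever being fixed.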